Microsoft proposes a phone that could predict your touch

Touchscreens have been around for a long time. Early examples were unsophisticated and expensive to manufacture. Elographics built the first touchscreen with a translucent surface in 1974. The first computer with touchscreen elements was the HP-150 in 1983, but it was not a success. In 1993, Apple released the Newton PDA, and IBM introduced Simon, an early smartphone with limited touchscreen features.

The major breakthrough came from Apple when it released its touchscreen smartphone, the iPhone, on June 29, 2007. Today nearly every phone manufacturer sells touchscreen phones, and the next step in touchscreen technology seemed to be Apple's Force Touch, which measures how hard you press on the screen.

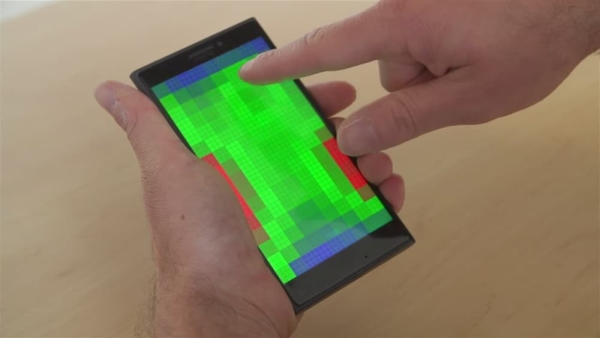

Microsoft has stirred up interest with a research project, announced in April, called Pre-Touch Sensing for Mobile Interaction. Ken Hinckley, the principal researcher at Microsoft who led the project, said the research is based on a whole different philosophy of interaction design. The prototype senses how you are gripping the device, as well as when and where your fingers are approaching the screen.

Pre-touch sensing lets the smartphone keep parts of its interface hidden until it detects a finger approaching the screen. This approach is described as a “nick of time” user interface, which could, for example, hide the playback controls on a video until they are needed. The technology begins to feel almost intelligent when you realise that, because the phone can detect how it is being held, it can also work out which hand a particular finger belongs to. So if you were using the phone one-handed, pre-touch sensing could present a different interface than if you were holding it with two hands, letting you scrub through a video with just your thumb or offering a different keyboard depending on which fingers you have available.

The technology offers many possible improvements to the way we use our mobile devices. For one, it should allow much greater precision when tapping small on-screen elements. If you’re reading a webpage in your mobile browser, for example, the UI could highlight the link you’re about to tap before you even touch the screen. It would also give mobile users the equivalent of a right-click: you could tap a file or icon with one finger, then hover your thumb over the screen to choose between options in a contextual menu.

Although this development is still at the research stage, it opens up exciting possibilities for building on existing technologies. But as with all of Microsoft Research’s projects, there’s no telling whether a smartphone with pre-touch sensing will ever make it out of the prototype phase, especially as Microsoft winds down its Nokia smartphone business.

How innovative do you think this technology is? And will it change the way we interact with our phones forever?
